Conversational AI is moving beyond “chatbots” and into a more serious role in banking: handling high-volume customer interactions, supporting staff, and automating routine service work without compromising compliance or trust.

This article defines conversational AI in a banking context, explains why it matters now, covers practical use cases and benefits, and highlights the most common deployment mistakes we see, plus how to avoid them.

Conversational AI in Banking, Defined

Conversational AI refers to systems that understand and respond to human language through chat or voice. In banking, it typically includes:

- Natural Language Understanding (NLU): Interprets customer intent, not just keywords.

- Dialogue management: Handles multi-step conversations such as verifying identity then completing an action.

- Knowledge access: Pulls accurate answers from approved sources such as product documentation, policy repositories, intranet content, or curated knowledge bases.

- Transaction support: Connects to banking systems through secure APIs to complete permitted actions (for example, card replacement requests or appointment booking).

- Human handover: Transfers context to an agent when the case is complex, sensitive, or requires judgement.

Well-designed conversational AI does not “make things up”. It operates within guardrails, relies on governed knowledge, and keeps an auditable trail.

Why Conversational AI in Banking Is Important

Banks face a familiar set of pressures: rising service costs, increased customer expectations, and tighter regulatory scrutiny. Conversational AI matters because it can address all three at scale.

Customers expect instant, always-on service

Customers are increasingly used to real-time digital experiences. They want quick answers, clear guidance, and simple actions without waiting in a queue.

Contact centres carry too much repetitive workload

A large portion of banking queries are routine: card issues, account access, payment status, limits, fees, branch details, and basic product questions. These can be automated safely when the experience is properly designed.

Banking is complex and heavily regulated

Unlike many industries, banking cannot afford casual automation. A conversational AI layer can actually reduce risk by standardising answers, enforcing approved wording, and ensuring consistent workflows.

Banks need to modernise without ripping out core systems

Conversational AI can sit on top of existing platforms and integrate through APIs. That means you can improve customer experience and operational efficiency without a full core banking transformation.

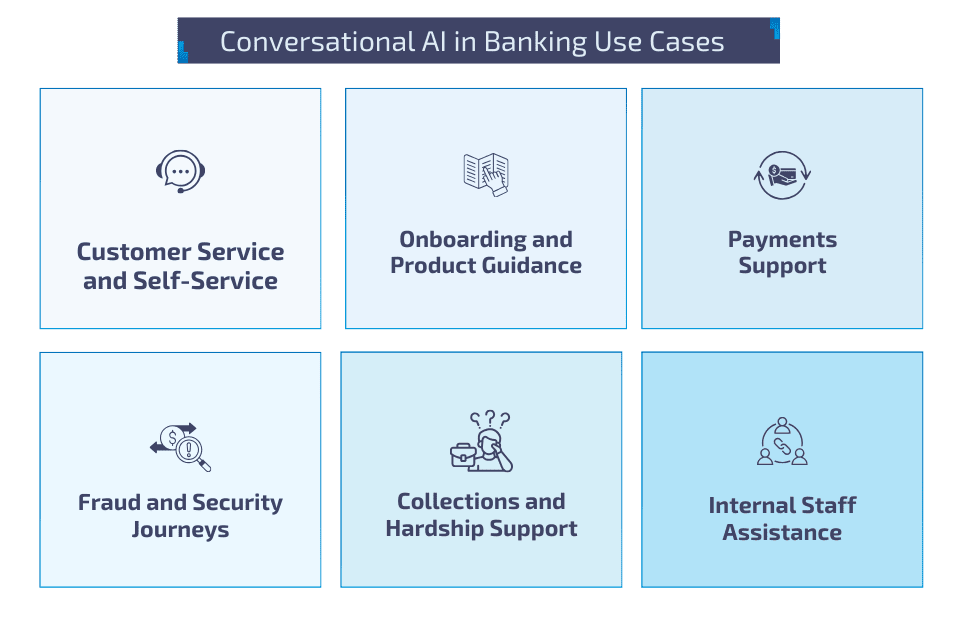

Conversational AI in Banking Use Cases

The best use cases share two traits: high volume and clear process boundaries. Here are the most common areas where conversational AI delivers value.

Customer service and self-service

Most banks begin with high-volume, low-risk journeys where customers simply want quick, accurate answers or straightforward service actions. These include queries about fees and charges, card and account access support, branch or ATM information, and appointment booking. Deloitte’s research on banking chatbots notes that customer experience can vary notably by segment, so these journeys work best when the assistant is designed for clarity, quick resolution, and easy escalation to a human when needed.

Onboarding and product guidance

Conversational AI can reduce drop-off by acting like a digital guide during onboarding and applications. Instead of forcing customers to hunt through FAQs, the assistant can explain eligibility, required documents, and next steps in plain language, then nudge users when they stall mid-journey. It can also support product discovery by helping customers compare account types or cards based on needs, then directing them into the appropriate application flow.

Payments support

Payment questions are high volume and often time-sensitive. A conversational AI layer can help customers check payment status, understand why a transfer failed, and follow guided troubleshooting steps before escalating. For more complex journeys like international transfers, it can walk users through required fields and common pitfalls, helping reduce errors that create repeat contacts later.

Fraud and security journeys

Fraud-related conversations are often urgent and emotionally charged, so conversational AI must be tightly controlled and designed around safe actions. It can help customers respond to alerts, freeze a card, secure an account, and understand what will happen next, while routing higher-risk scenarios to specialist teams. Reuters has reported on NatWest’s collaboration to enhance customer support and fraud prevention using AI. It is one sign that banks increasingly treat these journeys as a practical area for conversational capability, not just basic Q&A.

Collections and financial hardship support

This is an area where automation needs extra care, but conversational AI can still add value when it’s positioned as support rather than pressure. It can handle respectful reminders, explain available repayment options, capture key information and intent, and route customers to trained agents with the context already collected. The aim is to reduce friction and speed up access to the right support, while keeping tone, compliance, and customer wellbeing central.

Internal staff assistance

Some of the strongest early wins come from internal-facing assistants for agents and operations teams. Instead of searching across fragmented documentation, staff can ask questions and retrieve approved policy guidance, product details, and troubleshooting steps in seconds. When connected to case management workflows, the assistant can also suggest next actions, required checks, or relevant knowledge articles, helping reduce handle time and improving consistency across teams.

6 Benefits of Conversational AI in Banking

When deployed with the right governance and integration approach, conversational AI creates measurable outcomes across cost, experience, and risk.

1. Faster response times and improved customer satisfaction

Customers get immediate help for routine needs, including outside working hours. This reduces frustration and improves experience consistency.

2. Reduced cost to serve

Automation of repetitive interactions lowers the load on contact centre agents, allowing teams to focus on complex, high-value cases.

3. Better operational consistency

Conversational AI can standardise how information is delivered. That means fewer incorrect answers, fewer “it depends on who you speak to” outcomes, and fewer avoidable escalations.

4. Improved agent productivity

With strong handover design, agents receive conversation context, collected information, and suggested next actions. This reduces handle time and improves first-contact resolution.

5. Stronger compliance and auditability

Banks can enforce approved responses, retain conversation logs, and track which knowledge sources were used. This is essential for regulated environments.

6. Scalable rollout across channels

Once the core flows and knowledge model are proven, banks can scale across web chat, in-app chat, messaging platforms, and voice, depending on channel strategy and security constraints.

Common Deployment Mistakes and How to Avoid Them

Conversational AI fails in banking when it is treated like a quick UX add-on rather than a governed capability. Here are the mistakes that cause the most pain.

Treating it as a chatbot project, not a banking capability

Many programmes start as a quick digital initiative and never mature into a properly owned service channel. The assistant gets launched, but there is no clear operating model behind it: who owns the journeys, who signs off content changes, who monitors performance, and how incidents are handled. To avoid this, treat conversational AI like any other banking channel. Assign product ownership across business, technology, operations, and compliance, define SLAs and monitoring, and set up a continuous improvement loop that reviews failures, escalations, and customer feedback.

Weak knowledge governance that leads to inconsistent or outdated answers

In banking, “almost correct” is still risky. A common mistake is connecting the assistant to too many content sources without approval workflows, version control, or accountability for accuracy. Over time, answers drift, policies change, and customers receive conflicting information. The fix is to build a governed knowledge layer with approved sources, clear review and publishing workflows, and auditability. Your assistant should be able to explain where an answer came from, and your teams should be able to update content safely and quickly.
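As an illustration only, a governed knowledge lookup might look something like the sketch below. It assumes a hypothetical `KnowledgeArticle` record with a source, version, approver, and review date; none of these names come from a specific product. The point is that the assistant returns provenance with every answer and declines to answer when no approved, in-date content exists.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class KnowledgeArticle:
    """Hypothetical record for one approved piece of content."""
    topic: str
    answer: str
    source: str        # approved source the wording came from
    version: int       # incremented on every published change
    approved_by: str   # accountable reviewer
    review_due: date   # content past this date is treated as stale


def answer_with_provenance(
    topic: str, articles: list[KnowledgeArticle], today: date
) -> Optional[dict]:
    """Return the newest in-date answer for a topic, with its provenance."""
    candidates = [
        a for a in articles if a.topic == topic and a.review_due >= today
    ]
    if not candidates:
        # No governed answer available: escalate rather than improvise.
        return None
    best = max(candidates, key=lambda a: a.version)
    return {
        "answer": best.answer,
        "source": best.source,
        "version": best.version,
        "approved_by": best.approved_by,
    }
```

A `None` result would typically trigger a handover or a "let me connect you" response rather than a generated guess.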

Over-automating sensitive journeys where judgement and empathy matter

Complaints, disputes, financial hardship, and complex fraud cases are not the place to chase high automation for its own sake. When banks attempt end-to-end automation in these areas, customers often feel trapped in scripted loops, and risk increases as nuance is missed. A safer approach is to automate triage and information capture, use early escalation triggers, and keep humans in the loop for sensitive or high-impact decisions. The assistant should support the journey, not force it.
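A minimal sketch of early escalation triggers is shown below. The intent names, distress phrases, and the failed-turn threshold are all illustrative assumptions; a real deployment would define them with compliance and vulnerable-customer teams.

```python
# All values below are illustrative assumptions, not a recommended policy.
SENSITIVE_INTENTS = {"complaint", "dispute", "financial_hardship", "complex_fraud"}
DISTRESS_PHRASES = ("can't pay", "cannot pay", "scam", "bereavement")
MAX_FAILED_TURNS = 2  # misunderstandings tolerated before offering a human


def route(intent: str, message: str, failed_turns: int) -> str:
    """Decide how far the assistant should take this conversation."""
    text = message.lower()
    if intent in SENSITIVE_INTENTS:
        # Capture key details, then hand over; never automate end to end.
        return "triage_then_human"
    if any(phrase in text for phrase in DISTRESS_PHRASES):
        return "human"
    if failed_turns >= MAX_FAILED_TURNS:
        # Avoid trapping the customer in a scripted loop.
        return "human"
    return "self_service"
```

Keeping the rules in plain data structures like this makes them easy for non-engineering reviewers to inspect and sign off.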

Security and authentication designed too late

Teams sometimes build an assistant that looks great in demo environments but cannot do meaningful work in production because authentication is unclear, risk controls are missing, or channel permissions are not aligned with banking policy. The result is either an assistant that can only answer generic FAQs or one that creates security exposure. To avoid this, separate public information from account-specific actions, design authentication paths that match the channel, and integrate with your existing identity and access management approach from day one.
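One way to picture the separation of public information from account-specific actions is a small policy table, sketched below. The action names, channel names, and authentication levels are hypothetical; in practice they would map onto the bank's existing identity and access management setup.

```python
from enum import IntEnum


class AuthLevel(IntEnum):
    ANONYMOUS = 0      # public information only
    AUTHENTICATED = 1  # logged-in customer
    STEP_UP = 2        # additional verification completed


# Hypothetical policy: minimum level each action requires.
REQUIRED_AUTH = {
    "branch_hours": AuthLevel.ANONYMOUS,
    "fee_information": AuthLevel.ANONYMOUS,
    "payment_status": AuthLevel.AUTHENTICATED,
    "freeze_card": AuthLevel.AUTHENTICATED,
    "change_limits": AuthLevel.STEP_UP,
}

# Hypothetical policy: highest level each channel can support.
CHANNEL_MAX_AUTH = {
    "web_chat": AuthLevel.ANONYMOUS,
    "voice": AuthLevel.AUTHENTICATED,
    "in_app_chat": AuthLevel.STEP_UP,
}


def decide(action: str, channel: str, session_level: AuthLevel) -> str:
    """Return allow, authenticate, redirect_channel, or deny."""
    required = REQUIRED_AUTH.get(action)
    if required is None:
        return "deny"  # unknown actions fail closed
    ceiling = CHANNEL_MAX_AUTH.get(channel, AuthLevel.ANONYMOUS)
    if required > ceiling:
        return "redirect_channel"  # this channel can never satisfy the policy
    if session_level < required:
        return "authenticate"
    return "allow"
```

Under these assumptions, a card freeze requested in anonymous web chat is redirected to a channel that supports login, rather than being silently refused or unsafely attempted.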

Poor handover design that frustrates customers and slows agents

A conversational AI experience fails quickly when customers cannot reach a human at the right time, or when agents receive no context and have to ask the same questions again. This increases handle time and damages trust. Strong deployments treat handover as a core feature: the assistant collects key details, captures intent, and transfers a clean summary and conversation history into the agent’s workflow. The customer should feel guided, not bounced.
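The handover described above could be represented as a simple payload, as in the sketch below. The field names and summary format are assumptions for illustration; the actual shape would depend on the agent desktop or case management system receiving it.

```python
from dataclasses import asdict, dataclass, field


@dataclass
class Turn:
    speaker: str  # "customer" or "assistant"
    text: str


@dataclass
class Handover:
    intent: str
    reason: str      # why the assistant escalated
    collected: dict  # details the customer already provided
    summary: str     # short note the agent reads first
    history: list = field(default_factory=list)


def build_handover(intent: str, reason: str, collected: dict, turns: list) -> Handover:
    """Package everything an agent needs so nothing is asked twice."""
    details = ", ".join(f"{key}={value}" for key, value in sorted(collected.items()))
    summary = (
        f"Customer intent: {intent}. "
        f"Escalated because: {reason}. "
        f"Collected: {details or 'nothing yet'}."
    )
    return Handover(intent, reason, dict(collected), summary, list(turns))
```

Calling `asdict()` on the result gives a plain dictionary that can be serialised and passed to the agent's workflow.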

Optimising for the wrong metrics, especially containment rate

Containment can look impressive on dashboards while hiding poor outcomes, such as customers giving up, repeating queries, or abandoning journeys. In banking, the goal is not simply to keep customers away from agents. It is to resolve needs safely and efficiently. Measure success with a balanced set of KPIs such as resolution quality, customer satisfaction, first-contact resolution, repeat contact rate, compliant behaviour, and escalation quality, alongside cost-to-serve improvements.
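To see why containment alone can mislead, consider the rough calculation below. The `Conversation` fields and the seven-day repeat window are assumptions made for the example; the definitions of "resolved" and "repeat contact" would need to be agreed with operations teams.

```python
from dataclasses import dataclass


@dataclass
class Conversation:
    escalated: bool         # handed to a human agent
    resolved: bool          # customer's need was actually met
    repeat_within_7d: bool  # customer came back about the same issue


def kpis(conversations: list) -> dict:
    """Compute containment next to outcome-focused measures."""
    total = len(conversations)
    if total == 0:
        return {}
    contained = [c for c in conversations if not c.escalated]
    return {
        # Share of conversations that never reached an agent.
        "containment_rate": len(contained) / total,
        # Share that never reached an agent AND were resolved.
        "resolved_containment_rate": sum(c.resolved for c in contained) / total,
        # Share resolved with no repeat contact, on any path.
        "first_contact_resolution": sum(
            c.resolved and not c.repeat_within_7d for c in conversations
        ) / total,
        "repeat_contact_rate": sum(c.repeat_within_7d for c in conversations) / total,
    }


sample = [
    Conversation(escalated=False, resolved=True, repeat_within_7d=False),
    Conversation(escalated=False, resolved=False, repeat_within_7d=True),
    Conversation(escalated=False, resolved=False, repeat_within_7d=False),
    Conversation(escalated=True, resolved=True, repeat_within_7d=False),
]
print(kpis(sample))
```

In this made-up sample, containment is 75% but only 25% of conversations were both contained and resolved, which is the gap a dashboard focused on containment would hide.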

Underestimating integration, leading to “answers without action”

Many assistants can explain a process but cannot actually complete it. Customers get information, then still have to call or navigate multiple screens to finish the job, which creates repeat contacts and disappointment. The way around this is to prioritise “answer plus action” flows where risk is controlled, integrate with key systems through secure APIs, and expand iteratively. Even a small set of well-integrated actions often outperforms a large assistant that only talks.

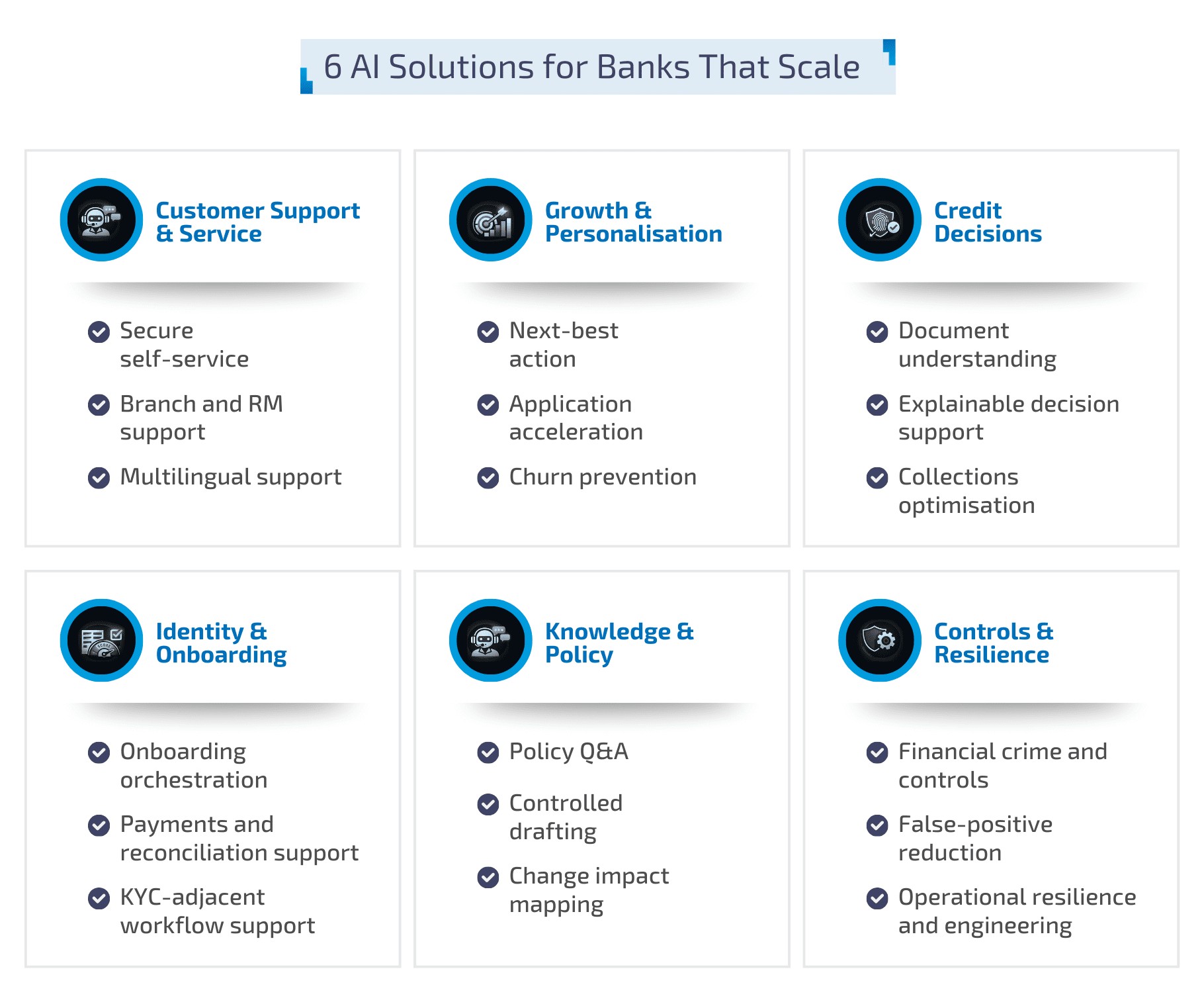

Unlock Conversational AI in Banking with BGTS

Conversational AI becomes valuable in banking when it is secure, compliant, integrated, and designed for real operations, not demos.

BGTS helps banks and financial institutions design and deliver conversational AI capabilities that fit regulated environments, connect cleanly to existing systems, and scale across channels with governance built in.

If you are exploring conversational AI, or looking to integrate AI more deeply into your existing systems, BGTS can help you shape the roadmap, implement the right architecture, and deliver measurable outcomes across service, cost, and customer experience. Get in touch to discuss your priorities.